Be Engaged

The data on AI and the future of work is genuinely uncertain. However, our response to it does not have to be.

…back to our regularly scheduled programming (the last essay was a little different)…

A few weeks ago, a friend of mine (who is an executive coach to tech CEOs and fund managers) described a conversation he had with his dermatologist. Not a venture capitalist. Not a software engineer. Not someone who doom-scrolls tech Twitter for sport. Instead, it was an accomplished, mid-career, probably mid-forties dermatologist. The kind of professional whose credentials represent exactly what a stable, well-built life in America is supposed to look like.

And this doctor was visibly shaken. He told my friend he might need to start thinking about a new career.

This anecdote confirmed something I had been sensing for a while. The anxiety around AI is no longer confined to founders, early-stage investors, or the chronically online. It has bled into the bloodstream. It is sitting in dermatology waiting rooms, at dinner tables, and in text threads between old college friends trying to figure out what the next ten years of their careers actually look like.

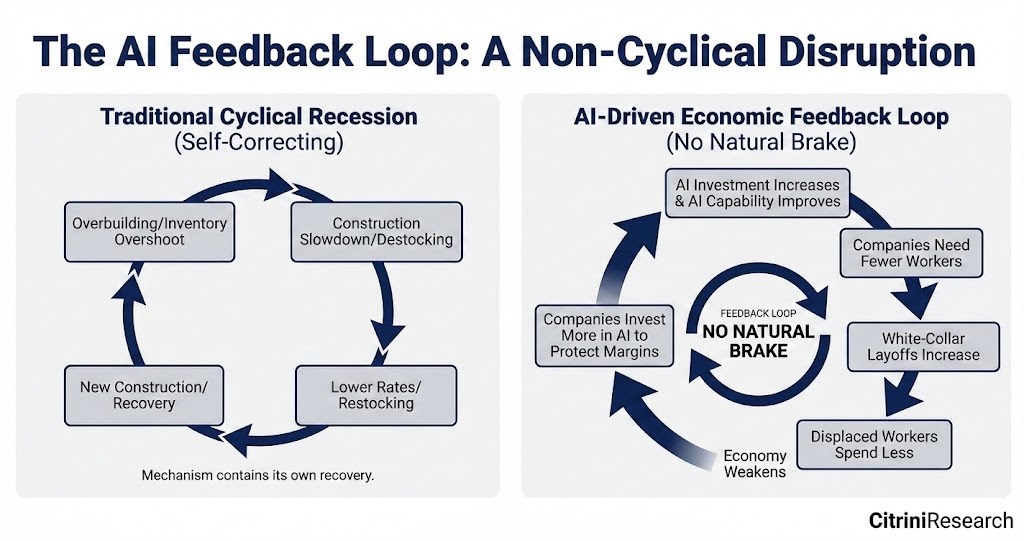

And you can understand why. Over the past couple of years, a very specific narrative has been on repeat. AI will replace knowledge work. One person will build and operate a billion-dollar company. And the data points keep arriving to support the emerging story: Block announces it is cutting 40% of its workforce, citing AI. Then a research firm called Citrini publishes a thought exercise written as a dispatch from June 2028, framed as a macro memo from the future describing a full-blown Global Intelligence Crisis. Unemployment at 10.2%, the S&P down 38%, and a vicious economic feedback loop with no natural brake. It was explicitly labeled a scenario, not a prediction. But the markets sold off anyway, and people screenshotted the headlines and started treating a mental exercise as a prophecy.

We live in a hyperbolic culture right now. Everything is breaking news. Everything is existential. And we have been trained, particularly over the last several years, to react faster than we reason. The Citrini piece is genuinely worth reading because it is a serious attempt to model a left-tail risk and think through the second- and third-order consequences of AI disruption hitting the real economy. But confusing a stress test with a forecast is a category error. And a lot of people are making it.

So I did what I do, what any trained equity research analyst would do. I read broadly. I took notes. I talked to people. And what I found was not a clean answer, because there is not one. What I found was a much more useful picture than either the doomers or the optimists want to admit.

Greg Ip, the chief economics commentator at the Wall Street Journal, pushed back directly on the apocalyptic case. His argument is grounded in history. Technology has always displaced some jobs. But it also lowers costs, raises productivity, creates demand, and generates new roles. The offsets are consistent enough that, across the sweep of American history, technological change has not raised unemployment for the economy as a whole. He points out that software developer jobs are actually up five percent year over year as of early 2026. Ip also mentioned that the number of accountants and financial analysts rose when Lotus 1-2-3 and Excel arrived, even though everyone predicted spreadsheet software would eliminate those roles. Another example mentioned in the article was that since Google Translate launched in 2006, the number of human translators employed in the United States has risen by 73 percent. Ip’s argument is not that AI cannot disrupt; it clearly can and will. He argues that a sector disruption becoming an economy-wide catastrophe requires a breakdown in how the market economy functions, and there is no evidence of that happening right now.

The Economist offered a different kind of calibration. Their piece, titled “The AI Productivity Boom Is Not Here Yet,” points out something that gets lost in both the fear and the hype. The tools are improving fast. The adoption is real and rising. But the macro data has not moved in any dramatic way. Roughly 41 percent of American workers used generative AI at work by late 2025, up from 31 percent a year earlier. But only about 13 percent use it every day. AI accounts for less than six percent of total work hours. And when you strip out the enormous investment surge in data center infrastructure, underlying productivity gains are close to zero. The article draws on a historical parallel worth sitting with: when factories replaced steam engines with electric motors, productivity barely moved at first. The real gains came decades later, once the factories were physically redesigned around the new reality. We are still in the early reorganization phase of AI. The technology is here. The rewiring has barely begun.

What I take from reading these three pieces together is: things do not change as fast as we fear in a year, but they change faster than we expect in five or ten.

The Citrini scenario is not a prediction. It is a warning about what an unmanaged transition could look like if we get complacent. The Ip piece is not a reason to relax. It is a reason to stop catastrophizing and start thinking clearly. The Economist piece is not reassuring. It is a reminder that the real impact of a new technology is felt when organizations and workflows are redesigned around it, and that phase is coming.

Here is what I think gets lost in most of this discourse. The macro debate is interesting. But it is not actually the question most people I know are wrestling with. The question they are wrestling with is much more personal. It sounds like: what do I do with all of this? Am I going to be okay? Do I have any agency here, or is this just happening to me?

And I see two failure modes among people I respect. The first is the person who dismisses AI entirely. They are not paying attention, not experimenting, not updating their approach, and are going to get quietly outcompeted by someone who is. The second is the person who has convinced themselves that none of their skills matter anymore, that the tools will outpace them no matter what, and who has essentially decided in advance that they have already lost. That person is also going to struggle, but for a different reason. They have surrendered agency before the game is even over. Actually, we are still in the first quarter.

Both of those postures are wrong. And they share a common flaw: they are passive.

I keep thinking about language around fear. I am being careful here because I do not want to overstate it, but some theologians argue that what is often translated as fear of God is, in the original Hebrew and Greek, closer to reverence. Not panic. Not paralysis. Not the feeling that something is about to crush you. A recognition of something larger than yourself, and a call to orient wisely in response to it. That is a much better posture for AI than either dismissal or dread. Respect it. Learn it. Use it. Do not be naive about what it can do. But do not surrender to it either.

The thing I notice when I actually use these tools is that they do not replace what is most native to how I work. I am a connector. I connect people, ideas, capital, and patterns. AI does not disrupt that. It helps me do more of it, faster, and sometimes more clearly. It lets me spend less time on the blank page and more time shaping what is actually mine to say. The question is not whether AI can do more cognitive tasks. It clearly can. The question is whether you use that as a reason to outsource your thinking, or as a reason to deepen the parts of your work that are genuinely yours.

What I want for the people in my network, particularly my cohort in that 35 to 45 range who are mid-career, who built their expertise in a world that rewarded knowing things, is for them to understand that the old premium on simply possessing information is narrowing. The new premium is on what you do with it. On taste. On judgment. On trust. On the ability to read a room, persuade a human, understand context, and make meaning out of chaos. Those things are not going away. But they need to be activated, not dormant.

No five-step plan. Just one thing.

The piece I found most useful, practically, was not the macro analysis at all. It was a letter that Jim VandeHei, the CEO of Axios, wrote to his wife and kids. His core message is simple: you are living history. Do not ignore it. Use AI with curiosity, discernment, and clear eyes. He told his kids that any knowledge work that does not require true expertise or a critical human connection is at real risk. He said to treat the tools like the smartest person you have ever met, but to remember they are imperfect, probably like the smartest person you know today. And he ended with a call to action that really resonated with me: There is no hero riding to the rescue. You be the hero.

I want to say something like that to my generation. Not to his kids, but to the adults I came up with who are building companies, raising families, managing teams, and trying to figure out what the next two decades of their careers look like. The letter he wrote was for people starting out. But the message applies to all of us.

You did not ask for this moment. It is here anyway. The data is genuinely uncertain about how fast this will move and how wide the disruption will be. Reasonable economists disagree. History offers useful but imperfect analogies. The full productivity impact has not yet shown up in the macro numbers. There is real time to orient. And yes, black swan events could become more frequent.

But the right response to uncertainty is not paralysis. It is engagement.

Figure out your superpower. It’s there. I promise. What is the thing that, when you are doing it, you feel most like yourself and most useful to other people? What is the topic someone could call you about on a random Tuesday with no prep, and you would have something really helpful to say? That is what you protect. That is what you build around. That is what AI should amplify, not hollow out.

Experiment with the tools. Not performatively, but genuinely. Find out what they are good at and where they fall short. Use them to go faster at the things that already come naturally to you. Do not use them as a substitute for thinking. Use them as a pressure test for your ideas.

And maybe most importantly: do not mistake reading about AI for engaging with AI. The discourse is not the same as the practice. One of them will make you more anxious. The other will make you more capable.

The Citrini piece that set off the recent anxiety in the markets and in group text threads even ended with a bit of realist optimism similar to VandeHei's advice, reminding us all that “We are reading this in March 2026, not June 2028…The canary is still alive.” The feedback loops described in the Citrini piece have not started. The reorganization The Economist describes has barely begun. There is still time to shape what this transition looks like, not just for yourself, but for the teams you lead, the companies you build, and the family, friends, and people coming up behind you.

So be engaged.

With gratitude AND agency,

Earnest

Articles referenced in this essay:

Citrini Research: The 2028 Global Intelligence Crisis

Wall Street Journal (Greg Ip): Tech Has Never Caused a Job Apocalypse. Don’t Bet on It Now.

The Economist: The AI Productivity Boom Is Not Here Yet

Axios (Jim VandeHei): Blunt AI Talk in Letter to His Kids